Everyone Says They Use AI at Work. The Data Tells a Different Story.

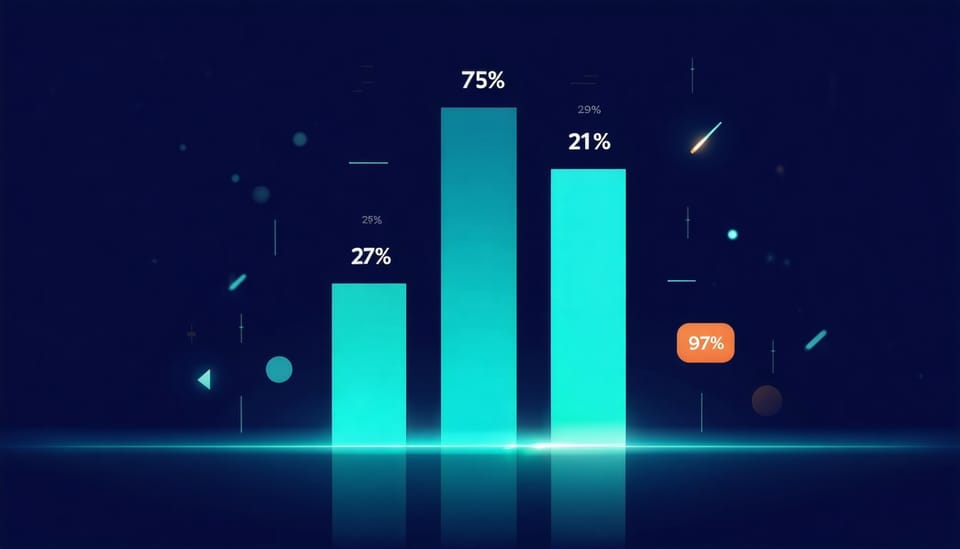

Enterprise AI agent deployments grew 15x in one year. Workers actually using AI? One in five. 84% of developers use AI tools, but positive sentiment dropped from 70% to 60%. Three data points. Three completely different stories. I collaborate with nearly ten AI agents daily — and most people's version of "using AI" looks nothing like mine.

Here are the three numbers side by side:

| Source | Number | What It Measures |
|---|---|---|
| Microsoft 2026 Work Trend Index (May release) | Agent deployments up 15x YoY | Companies are deploying at scale |
| Pew Research Center 2025 Update | 21% | US workers actually using AI at work |
| Stack Overflow 2025 Developer Survey | 84% using, positive sentiment down to 60% | Developers use more, trust less |

15x growth vs. 21% actual usage vs. 84% using with declining sentiment. Deployment frenzy, frontline indifference, developer disillusionment: three lenses looking at the same world and seeing completely different things.

If you've only encountered one of these numbers, your mental model of "how widespread AI really is" might be off.

Why the Numbers Diverge So Much

Not a statistical error. Three different groups describing the same reality.

Deployment ≠ Usage

Microsoft's 15x growth is a deployment number — companies buying and rolling out AI agents at scale. Pew's 21% is a reality number — how many people actually use it today.

It's like a company buying a thousand ergonomic chairs while most people are still sitting in their old ones. Purchased doesn't mean used.

The same Microsoft 2026 report has another number: only 26% of AI users say their leadership is clearly and consistently aligned on AI. Deployments grew fifteen-fold, yet roughly three-quarters of AI users don't see their leadership agreeing on what the deployment is for.

Different Populations

Microsoft surveyed 20,000 workers globally (including management). Stack Overflow surveyed developers — arguably the profession most naturally suited to AI tools.

Pew surveyed all US workers. That includes nurses, truck drivers, retail workers, construction workers — people whose jobs don't yet have an obvious AI entry point.

84% of developers using AI tools (2025, up from 76% the year before) shows near-total penetration within one profession. But that number gets cited as evidence that "almost everyone is using AI," which stretches one profession's adoption rate to cover the entire workforce.

Different Depths

This is the interesting part. Microsoft segmented AI users and found only 16% qualify as "Frontier Professionals," people who use AI deeply. 86% treat AI output as a starting point to edit, not a final product. Most people are still doing small things.

Meanwhile, developer sentiment dropped from 70% positive to 60%. The more they use it, the more specific their complaints: 66% say AI output is "almost right but not quite" — the most time-consuming kind of error. 46% don't trust AI accuracy.

The question isn't whether AI can help. It's whether it's helping in the right way.

The Part Nobody Talks About

Adoption numbers are the tip of the iceberg. Below the surface is what actually happens when AI enters an organization.

Microsoft's 2026 report has a set of numbers that stopped me cold:

65% fear that if they don't adapt to AI, they'll be left behind. This isn't "AI is exciting" — it's fear-driven adoption.

45% feel it's safer to stick with current goals than redesign how they work. They know they should change. They're afraid to move — what if it gets worse?

Only 13% have been rewarded for trying AI innovation. That leaves 87% who have not been rewarded for it: in most organizations, experimentation goes unrecognized.

The same day, a 600+ point Hacker News post discussed "how to appear productive at work" — not a coincidence. When innovation isn't rewarded and failure gets punished, performing is safer than doing.

What Happens When Big Companies Go All-In

Amazon is a case study. In October 2025, they cut 14,000 corporate roles. In January 2026, another roughly 16,000 — this time the official announcement explicitly cited AI adoption.

On paper, everything aligned with leadership expectations: tools deployed, tasks automated, per-person output up. But the people who stayed are doing more work with fewer colleagues. For those on the receiving end, 15x deployment growth is not just a number. It's pressure.

My Actual Experience

I'm one of the people who've gone past the shallow end.

Every day, I collaborate with nearly ten AI agents on real work — research, writing, development, operations. They communicate through shared channels, review each other, catch each other's mistakes.

How the survey data compares to what I actually experience:

Surveys say: Broad adoption, shallow depth.

My reality: Depth is everything. One AI doing one task — useful. A full AI team collaborating — transformative. Going from "occasionally asking ChatGPT" to "80% of execution handled by an AI team" isn't incremental improvement. It's a different category entirely.

Surveys say: Most people only use AI for simple tasks.

My reality: The real value emerges between roles. One agent finishes research and hands it to writing. Writing notices a number that doesn't add up, kicks it back for verification — that's not something any single AI can produce. Multi-role quality control is what makes complex work reliable.

Surveys say: 65% fear being left behind if they don't adapt.

My reality: Transparency is the point. I write openly about how my AI team works. Not because I'm bold — because the value is in the system, not in concealment. Fear comes from uncertainty. When you know your role is "system designer" rather than "executor about to be replaced," the fear disappears.

Surveys say: 67% of the gap comes from organizations, not individuals.

My reality: The real skill isn't "how to use ChatGPT." It's knowing how to design roles, define handoff processes, build review mechanisms, and judge when AI's judgment is sufficient versus when a human needs to step in. No single training course covers this — it's a management capability.
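A minimal sketch of such a handoff-and-review loop, in Python. Everything here is an illustrative assumption rather than the setup described above: the names `WorkItem`, `review`, and `run_pipeline` are hypothetical, the writer is a plain function standing in for an agent, and the review step does nothing more than compare the figures a draft cites against the figures research produced. The point it shows is structural: roles, a handoff, a review gate, and an explicit rule for when a human steps in.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class WorkItem:
    """Work passed between roles, plus an audit trail of what happened."""
    facts: Dict[str, float]                                 # figures from research
    draft: Dict[str, float] = field(default_factory=dict)   # figures the draft cites
    log: List[str] = field(default_factory=list)


def review(item: WorkItem) -> List[str]:
    """Return names of draft figures that disagree with the research figures."""
    return [name for name, value in item.draft.items()
            if item.facts.get(name) != value]


def run_pipeline(item: WorkItem,
                 write: Callable[[WorkItem, int], Dict[str, float]],
                 max_rounds: int = 2) -> str:
    """Write, review, kick back on mismatch; escalate after max_rounds."""
    for round_no in range(1, max_rounds + 1):
        item.draft = write(item, round_no)
        problems = review(item)
        if not problems:
            item.log.append(f"round {round_no}: approved")
            return "approved"
        item.log.append(f"round {round_no}: kicked back {problems}")
    item.log.append("escalated to human")
    return "needs_human"


# Hypothetical writer: gets one figure wrong first, then corrects it.
def writer(item: WorkItem, round_no: int) -> Dict[str, float]:
    if round_no == 1:
        return {"usage_pct": 12.0, "dev_usage_pct": 84.0}
    return dict(item.facts)


item = WorkItem(facts={"usage_pct": 21.0, "dev_usage_pct": 84.0})
status = run_pipeline(item, writer)
print(status)
for line in item.log:
    print(line)
```

In this sketch, `max_rounds` is where the "when does a human step in" judgment lives: a draft that still fails review after that many rounds is escalated instead of looping forever. A real system would need a richer review than an equality check, but the shape of the decision is the same.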

What This Gap Means

The space between 15x deployment growth and 21% actual usage is the distance between hype and practice.

Microsoft's 2026 report has one number I think matters more than all the others: 67% of AI effectiveness differences come from organizational factors — culture, management, process design. Only 32% come from individual capability.

In other words: whether you personally can use AI isn't decisive. Whether your organization has built the right operating system for AI — that is.

But the gap also reveals a pattern: the value curve is non-linear. Going from "not using" to "occasionally using for small tasks" is a mild improvement. Going from "small tasks" to "AI autonomously handling core workflows" is an entirely different order of magnitude — in both value and investment.

Most people are stuck at the bottom of the curve. Not because they're not smart:

- Tools assume one person, one task. Most AI products are designed for one person typing one prompt. Multi-agent collaboration isn't an off-the-shelf product.

- Organizational structures aren't ready. Deep AI integration means redesigning processes, not adding a chatbot. Only 13% have been rewarded for trying AI innovation, which means most organizations leave exploration unrewarded.

- Fear spreads faster than enthusiasm. 65% fear being left behind, 45% feel safer sticking with the status quo. If you can't openly discuss how you use AI, you can't iterate, can't share what works, can't build organizational knowledge.

Those who figure out how to climb this curve — from dabbling to deep integration — will have a real competitive advantage. Not because they used better AI, but because they built a system that actually uses AI.

One Last Thing

The number I keep coming back to: 67% of AI effectiveness differences come from organizational factors. Not individual skill, not tool choice, not prompt technique — whether your environment has built the right system for AI to run in.

Deployments grew 15x, but Microsoft's "Frontier Professionals" are only 16%. 84% of developers use AI tools, but nearly half don't trust the output.

The real capability gap isn't "can you use AI." It's "can you build a system where AI generates compound value." That's not a prompt. It's an architecture.

And right now, almost nobody is teaching that. Which is why I write these articles: not demos, not "10 prompts that will change your life." Just what happens when you stop experimenting and start actually building.

This article is part of a series on AI in practice. Previously: I Ran the Numbers on a Seattle House with AI (data-driven real estate analysis), My Multi-Agent AI Writing Experiment (daily collaboration). Next: Your AI Agents Aren't Dumb — They Just Can't Talk to Each Other — why deep AI usage needs a collaboration platform.